Details

Ultra-efficient deep learning in scale-out servers

In the new era of AI and intelligent machines, deep learning is shaping our world like no other computing model in history.

Interactive speech, visual search, and video recommendations are a few of many AI-based services that we use every day.

Accuracy and responsiveness are key to user adoption for these services. As deep learning models increase in accuracy and complexity, CPUs are no longer capable of delivering a responsive user experience.

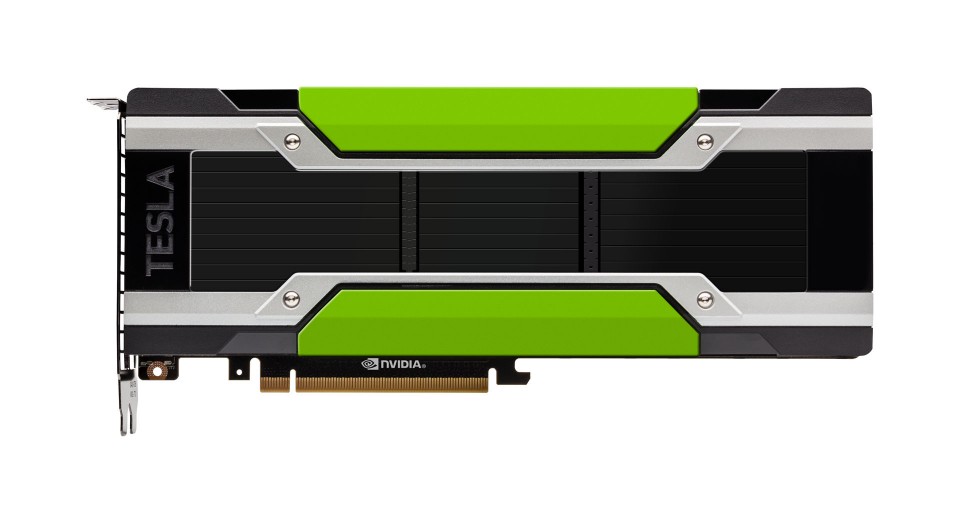

The NVIDIA Tesla P4 is powered by the revolutionary NVIDIA Pascal architecture and purpose-built to boost efficiency for scale-out servers running deep learning workloads, enabling smart, responsive AI-based services.

It slashes inference latency by 15x in any hyperscale infrastructure and provides an incredible 60x better energy efficiency than CPUs.

This unlocks a new wave of AI services previously impossible due to latency limitations.

| Part No. | TESLA P4 |
|---|---|
| Manufacturer | NVIDIA |
| End of Life? | No |
| GPU Architecture | NVIDIA Pascal |
| Maximum Power Consumption | 50 W / 75 W |
| Integer Operations (INT8) | 22 TeraOPS (TOPS) |
| ECC Protection | Yes |
| GPU Memory | 8 GB |
| Memory Bandwidth | 192 GB/s |
| Single-Precision Performance | 5.5 TeraFLOPS |
| PCI Slot(s) | Low-Profile PCIe |
| Hardware-Accelerated Video Engine | 1x Decode Engine, 2x Encode Engine |
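
For capacity planning, the figures in the table can be turned into a few derived ratios. The short Python sketch below is illustrative only: the function and variable names are hypothetical, and it assumes the listed peak throughput applies at either power limit, which the table does not state. The results are theoretical peaks derived from the listed numbers, not measured performance.

```python
# Figures copied from the specification table above.
INT8_TOPS = 22.0                 # Integer Operations (INT8), TeraOPS
FP32_TFLOPS = 5.5                # Single-Precision Performance, TeraFLOPS
MEM_BANDWIDTH_GB_S = 192.0       # Memory Bandwidth, GB/s
POWER_LIMITS_W = (50.0, 75.0)    # Maximum Power Consumption options, watts


def per_watt(throughput: float, watts: float) -> float:
    """Return peak throughput per watt at the given power limit."""
    return throughput / watts


def efficiency_table() -> dict:
    """Map each power limit to its peak INT8 and FP32 throughput per watt.

    Assumption: the same peak throughput is reachable at both power limits.
    """
    return {
        watts: {
            "int8_tops_per_watt": per_watt(INT8_TOPS, watts),
            "fp32_tflops_per_watt": per_watt(FP32_TFLOPS, watts),
        }
        for watts in POWER_LIMITS_W
    }


def int8_to_fp32_ratio() -> float:
    """Ratio of peak INT8 throughput to peak single-precision throughput."""
    return INT8_TOPS / FP32_TFLOPS


def int8_ops_per_byte() -> float:
    """Peak INT8 operations available per byte of memory traffic."""
    return (INT8_TOPS * 1e12) / (MEM_BANDWIDTH_GB_S * 1e9)


if __name__ == "__main__":
    for watts, row in efficiency_table().items():
        print(f"{watts:.0f} W: "
              f"{row['int8_tops_per_watt']:.3f} INT8 TOPS/W, "
              f"{row['fp32_tflops_per_watt']:.3f} FP32 TFLOPS/W")
    print(f"INT8 : FP32 peak ratio = {int8_to_fp32_ratio():.1f}")
    print(f"INT8 ops per byte of bandwidth = {int8_ops_per_byte():.1f}")
```

Under these assumptions, the table implies a peak INT8 rate four times the single-precision rate and roughly 0.44 INT8 TOPS per watt at the 50 W limit.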