Details

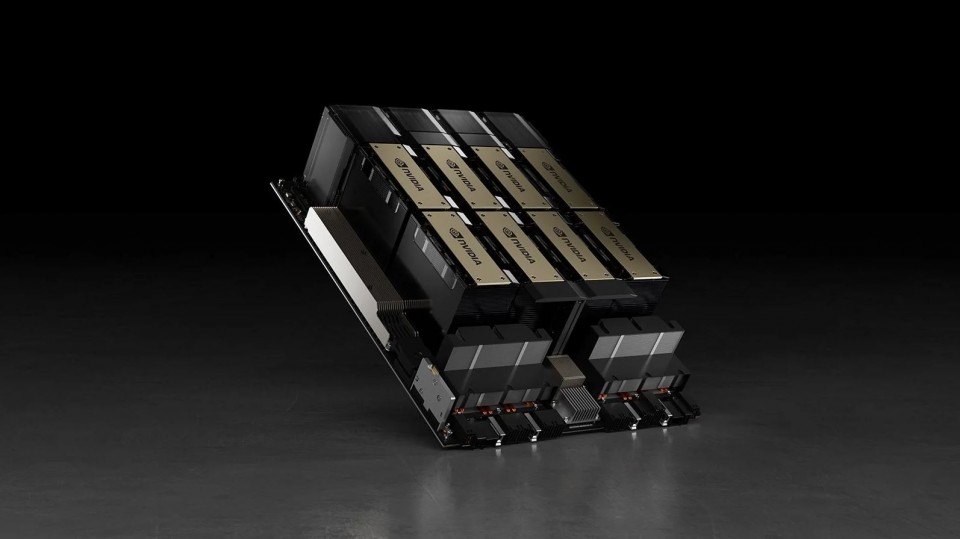

The realisation of scalable AI

Powered by NVIDIA Hopper, a single H200 Tensor Core GPU offers the performance of over 130 CPUs—enabling researchers to tackle challenges that were once unsolvable. The H200 has repeated it's win for MLPerf, the first industry-wide AI benchmark, validating itself as the world’s most powerful, scalable, and versatile computing platform.

Every industry wants intelligence. Within their ever-growing lakes of data lie insights that can provide the opportunity to revolutionise entire industries: personalised cancer therapy, predicting the next big hurricane, and virtual personal assistants conversing naturally.

These opportunities can become a reality when data scientists are given the tools they need to realize their life's work.

The NVIDIA Hopper H200 is the world's most advanced data GPU ever built to accelerate highly parallelised workloads, Artificial-Intelligence, Machine and Deep Learning. For graphics it pushes the latest rendering technology DLSS (deep learning super-sampling), ray-tracing, and ground truth AI graphics.

Hopper - 5th Generation Tensor Cores

First introduced in the NVIDIA Volta architecture, NVIDIA Tensor Core technology has brought dramatic speedups to AI, bringing down training times from weeks to hours and providing massive acceleration to inference. The NVIDIA Ampere architecture builds upon these innovations by bringing new precisions -Tensor Float (TF32) and Floating Point 64 (FP64) - to accelerate and simplify AI adoption and extend the power of Tensor Cores to HPC.

By pairing CUDA cores and Tensor Cores within a unified architecture, a single server with H200 GPUs can replace hundreds of commodity CPU servers for traditional HPC and deep learning.

TENSOR CORE

Equipped with 528 Tensor Cores, the H200 delivers 1000 (FP16) teraFLOPS (TFLOPS), 500 (FP16) TFLOPS of deep learning performance.

4th Generation NVLink

Scaling applications across multiple GPUs requires extremely fast movement of data. The forth generation of NVIDIA NVLink in H200 doubles the GPU-to-GPU direct bandwidth to 900 gigabytes per second (GB/s), almost 10X higher than PCIe Gen3. When paired with the latest generation of NVIDIA NVSwitch, all GPUs in the server can talk to each other at full NVLink speed for incredibly fast data transfers.

MAXIMUM EFFICIENCY MODE

The new maximum efficiency mode allows data centres to achieve up to 40% higher compute capacity per rack within the existing power budget.

In this mode, the H200 runs at peak processing efficiency, providing up to 80% of the performance at half the power consumption.

HBM3

The H200 provides massive amounts of compute to data centres. To keep the GPU compute engines fully utilised, it has a leading class 3 terabytes per second (TB/sec) of memory bandwidth, a 67 percent increase over the previous generation.

In addition, the H200 has significantly more on-chip memory, including a 40 megabyte (MB) level 2 cache—7X larger than the previous generation—to maximise compute performance.

PROGRAMMABILITY

The H200 is architected from the ground up to simplify programmability. Its new independent thread scheduling enables finer-grain synchronisation and improves GPU utilisation by sharing resources among small jobs.

600+ GPU-ACCELERATED APPLICATIONS

The Hopper H200 is the flagship product of the NVIDIA data centre platform for deep learning, HPC, and graphics.

The platform accelerates over 600 HPC applications and every major deep learning framework. It's available everywhere, from desktops to servers to cloud services, delivering both dramatic performance gains and cost-savings opportunities.

- Amber

- ANSYS Fluent

- Gaussian

- Gromacs

- LD-DYNA

- NAMD

- OpenFOAM

- Simulia Abaqus

- VASP

- WRF

| Part No. | H200-SXM5 |

|---|---|

| Manufacturer | nvidia |

| End of Life? | No |

| Preceeded By | H100 |

| Form Factor | SXM5 |

| GPU Architecture | NVIDIA, Hopper, VOLTA, CUDA, DirectCompute, OpenCL, OpenACC |

| Maximum Power Consumption | 700W |

| Thermal Solution | Passive |

| NVIDIA CUDA Cores | 16896 |

| NVIDIA Tensor Cores | 528 |

| NVIDIA NVLink | NVLink 4 18 Links (900GB/sec) |

| Compute APIs | CUDA, DirectCompute, OpenCL™, OpenACC |

| ECC Protection | Yes |

| GPU Memory | 141GB |

| Memory Interface | HBM3 |

| Memory Bandwidth | 4.8TB/s |

| Double-Precision Performance | 30 TFLOPs |

| Single-Precision Performance | 60 TFLOPs |

| Tensor Performance | 2000 TOPs INT8 Tensor |