Details

Welcome to the era of AI

Powered by NVIDIA Volta™, a single V100S Tensor Core GPU offers the performance of nearly 32 CPUs—enabling researchers to tackle challenges that were once unsolvable. The V100S won MLPerf, the first industry-wide AI benchmark, validating itself as the world’s most powerful, scalable, and versatile computing platform.

Every industry wants intelligence. Within their ever-growing lakes of data lie insights that can provide the opportunity to revolutionize entire industries: personalized cancer therapy, predicting the next big hurricane, and virtual personal assistants conversing naturally.

These opportunities can become a reality when data scientists are given the tools they need to realize their life's work.

NVIDIA Tesla V100S is the world's most advanced data GPU ever built to accelerate AI, HPC, and graphics.

Powered by NVIDIA Volta, the latest GPU architecture, Tesla V100s offers the performance of 100 CPUs in a single GPU - enabling data scientists, researchers, and engineers to tackle challenges that were once impossible.

VOLTA ARCHITECTURE

By pairing CUDA cores and Tensor Cores within a unified architecture, a single server with V100 GPUs can replace hundreds of commodity CPU servers for traditional HPC and deep learning.

TENSOR CORE

Equipped with 640 Tensor Cores, V100 delivers 130 teraFLOPS (TFLOPS) of deep learning performance.

That’s 12X Tensor FLOPS for deep learning training, and 6X Tensor FLOPS for deep learning inference when compared to NVIDIA Pascal™ GPUs.

NEXT-GENERATION NVLINK

NVIDIA NVLink in V100 delivers 2X higher throughput compared to the previous generation.

Up to eight V100 accelerators can be interconnected at up to gigabytes per second (GB/sec) to unleash the highest application performance possible on a single server.

MAXIMUM EFFICIENCY MODE

The new maximum efficiency mode allows data centers to achieve up to 40% higher compute capacity per rack within the existing power budget.

In this mode, V100 runs at peak processing efficiency, providing up to 80% of the performance at half the power consumption.

HBM2

With a combination of improved raw bandwidth of 900GB/s and higher DRAM utilization efficiency at 95%, V100 delivers 1.5X higher memory bandwidth over Pascal GPUs as measured on STREAM.

V100 is now available in a 32GB configuration that doubles the memory of the standard 16GB offering.

PROGRAMMABILITY

V100 is architected from the ground up to simplify programmability. Its new independent thread scheduling enables finer-grain synchronization and improves GPU utilization by sharing resources among small jobs.

600+ GPU-ACCELERATED APPLICATIONS

V100 is the flagship product of the NVIDIA data center platform for deep learning, HPC, and graphics.

The platform accelerates over 600 HPC applications and every major deep learning framework. It's available everywhere, from desktops to servers to cloud services, delivering both dramatic performance gains and cost-savings opportunities.

- Amber

- ANSYS Fluent

- Gaussian

- Gromacs

- LD-DYNA

- NAMD

- OpenFOAM

- Simulia Abaqus

- VASP

- WRF

| Part No. | 900-2G500-0040-000 G |

|---|---|

| Manufacturer | nvidia |

| End of Life? | No |

| Preceeded By | v100 |

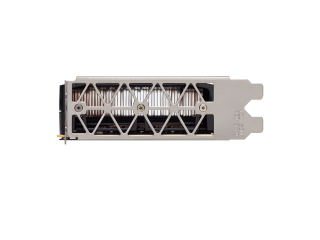

| Form Factor | PCIe Full Height/Length |

| GPU Architecture | NVIDIA VOLTA, CUDA, DirectCompute, OpenCL, OpenACC |

| Maximum Power Consumption | 250 W |

| Thermal Solution | Passive |

| NVIDIA CUDA Cores | 5120 |

| NVIDIA Tensor Cores | 640 |

| NVIDIA NVLink | 8 TESLA V100s supported |

| Performance | 130 TFLOPS |

| Compute APIs | CUDA, DirectCompute, OpenCL™, OpenACC |

| ECC Protection | No |

| GPU Memory | 32GB HBM2 |

| Memory Interface | ECC |

| Memory Bandwidth | 1134 GB/sec |

| Double-Precision Performance | 8.2 TeraFLOPS |

| Single-Precision Performance | 16.4 TeraFLOPS |

| Tensor Performance | 130 TFLOPS |

| PCI Slot(s) | PCIe Gen3 |